On Emergency Leave on 9/11

In September of 2001, I had been on active duty in the US Navy for six years. I was stationed with the World-Famous Golden Dragons of VFA-192 in Atsugi, Japan, running the night shift of the Integrated Weapons Team. On the morning of September 11th, 2001, I was in South Carolina, away from my squadron-mates on emergency leave because my mother was in intensive care. It was already a traumatic time in my life before the news of the Twin Towers woke me from my sleep. A few minutes later, my division officer called to see how much time I needed before getting on the first plane back to Japan. I fully expected to join my squadron on another tour of the Persian Gulf on the USS Kitty Hawk and extend my tour overseas. I had no idea how much my life was about to change and how different it would be from what I had planned.

Quantum Leap into a New Life

In the following weeks, I would get back to Japan, meet my future wife, receive orders to the US Naval Postgraduate School (NPS), pack my seabag and wake up in Monterey, California, before I could take a breath. The US Department of Defense was in rapid transition in response to the terrorist attacks of September 11th. One of the key areas identified as needing attention was cybersecurity operations (SECOPS). Through a series of strange circumstances, it fell to me to figure out how to implement SECOPS at NPS. Coming from Naval Aviation, I was extremely disturbed by the lack of guidance and documentation in SECOPS. There were no pubs, workflows, or QA. I had seven sailors working with me, and I struggled to figure out how to give them tasks they could carry out with their skillsets.

Building SECOPS on Law Enforcement and Aviation

One of the fortuitous decisions made in the formation of my precious Network Security Group (NSG) was that it was placed under the security directorate and not IT. This meant my department meetings were populated by military and civilian police and intelligence officers. The mindset I was seeing from my divisional peers was built on military and law enforcement models. Between what I was learning from them and what I knew from Naval Aviation, I started cobbling together workflows that my team could execute against (see Cross Domain Insights from 2016 for details).

Fisherman: 2002 Prototype of SOAR

In Naval Aviation, we used an application called NALCOMIS to track the units of work. Pilots or maintenance personnel would create maintenance action forms (MAF) and technicians would resolve the MAFs in NALCOMIS by executing flowcharts in publications.

The first thing I did was build a robust set of flowcharts. They wallpapered the workspace and were a common source of teasing. The next thing I did was code an ASP web application that I called Fisherman. It created units of work from intrusion detection system (IDS) alerts, antivirus detections, failed logins, content filter hits and vulnerability management (VA) findings. Each unit was documented in a ticket and assigned to a responder. The responder was given a flowchart to execute and document in the ticket. Then I would inspect (QA) the work.
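The workflow above (detection, ticket, responder, flowchart, inspection) can be sketched in a few lines. This is only an illustration of the idea, not the original ASP code: the names `Ticket`, `TicketQueue`, `FLOWCHARTS` and the flowchart labels are all hypothetical, and round-robin assignment is an assumption.

```python
from dataclasses import dataclass, field
from itertools import count, cycle
from typing import List, Optional

# Hypothetical mapping from detection source to the flowchart a responder follows.
FLOWCHARTS = {
    "ids": "FC-01 intrusion alert",
    "antivirus": "FC-02 malware detection",
    "failed_login": "FC-03 failed logins",
    "content_filter": "FC-04 content filter hit",
    "va": "FC-05 vulnerability finding",
}


@dataclass
class Ticket:
    ticket_id: int
    source: str
    summary: str
    responder: str
    flowchart: str
    notes: List[str] = field(default_factory=list)
    qa_passed: Optional[bool] = None  # None until the work has been inspected


class TicketQueue:
    """Creates one unit of work per detection and hands it to a responder."""

    def __init__(self, responders):
        self._ids = count(1)
        self._responders = cycle(responders)  # assumed round-robin assignment
        self.tickets = []

    def open_ticket(self, source, summary):
        flowchart = FLOWCHARTS[source]  # raises KeyError for an unknown source
        ticket = Ticket(next(self._ids), source, summary,
                        next(self._responders), flowchart)
        self.tickets.append(ticket)
        return ticket

    def inspect(self, ticket, passed):
        """Record the result of the QA inspection on the ticket."""
        ticket.qa_passed = passed


queue = TicketQueue(["responder_a", "responder_b"])
first = queue.open_ticket("ids", "Port scan from an internal host")
second = queue.open_ticket("antivirus", "Malware found on a lab workstation")
```

The point of the structure is that every detection becomes a tracked unit of work with a named owner and a named procedure, which is what made inspection possible.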

Teaching Computers was Easier than Teaching Humans

As I performed the inspections, I would find multiple errors by my team. I tried to teach them the correct way to execute the steps, and I tried to make the steps clearer. It was frustrating because the team didn’t have the skills to do complex investigations (because we hadn’t given them that training). While I was getting funding for training, I decided it would be easier to move more and more of the flowcharts into Fisherman and teach the computer what it needed to know. Then I would engage the team members with a smaller, more focused set of tasks to accomplish.

Playbooks: The Never-ending Story

Luckily for me, as I was starting this work, Ryan Self transferred in from the USS Reagan and was hungry to improve his development chops. I sent him off to coding bootcamps and together we started pouring workflows into Fisherman.

Many of our flowcharts (normally called playbooks today) were about protecting against various types of insider threats. We were at a military base with exchange students and we were conducting sensitive research (I’ll let you fill in the Ian Fleming implications yourself). The problem Ryan and I discovered was that the faster we implemented playbooks, the faster the adversary worked around them. The flowcharts became increasingly complex, and harder and harder to maintain or explain to the team.

Blowing up a Research Grant

We didn’t realize it at the time, but the complexity of the playbooks was creating vulnerabilities ripe for exploit. When the Blaster worm hit, it was a painful experience for us. It seemed that as soon as we had recovered from that nightmare, Microsoft disclosed a vulnerability that we were certain would be exploited in similar fashion. We developed a playbook that proactively notified the owners of vulnerable systems to patch, with a warning that we would otherwise force the patch. Users could file an exception, which Fisherman honored so that it would not blow up sensitive research. Then one day, the playbook pushed a patch to a system that was three months into complex modeling and blew up the model. It turned out the researcher had been too stressed to check emails. It blew the research off schedule and had me standing at attention for a painful amount of time while I was ferociously dressed down by the brass.
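The decision logic of that notify-then-force playbook can be reconstructed roughly as follows. This is a minimal sketch under assumptions, not the Fisherman code: `Host`, `plan_patch_actions`, the action labels and the `warning_read` field are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Host:
    name: str
    vulnerable: bool
    warning_read: bool = True  # hypothetical: did the owner actually see the email?


def plan_patch_actions(hosts, exceptions):
    """Return {host name: action} the way the playbook described above decided it.

    Note that warning_read is never consulted: a missing exception is
    treated as consent, whether or not the owner saw the warning.
    """
    actions = {}
    for host in hosts:
        if not host.vulnerable:
            actions[host.name] = "no_action"
        elif host.name in exceptions:
            actions[host.name] = "skip_exception"
        else:
            actions[host.name] = "force_patch"
    return actions


hosts = [
    Host("office-pc", vulnerable=True),
    Host("exempt-lab", vulnerable=True),
    Host("model-rig", vulnerable=True, warning_read=False),
]
print(plan_patch_actions(hosts, exceptions={"exempt-lab"}))
```

In this sketch `model-rig` is patched even though its owner never read the warning, which is the gap the story describes: the flow was logically correct and still wrong about the world.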

Change Controls

After I blew up an 8-figure research grant with a SOAR playbook, new restrictions came into play. All playbooks were reviewed by a committee that understood neither SECOPS nor my software. This ultimately resulted in only non-intrusive playbooks being executed. I started spending more time in change control committees defending playbooks than the playbooks were saving me. I was back to minimal automation and maximum human investigation.

Getting the Band Back Together

Ten years after I left the US Navy, we founded WitFoo to solve problems similar to the ones I started tackling after 9/11. Ryan was crazy enough to rejoin me in those efforts. Two powerful things we had at our disposal were the lessons learned from trying to solve SECOPS with flowchart-based playbooks and radical improvements in computer science platforms.

Déjà vu Trauma

When SOAR started entering the commercial market, the products were generally built using constraints that were remarkably similar to our Fisherman efforts:

- Playbooks are built on logical flows (flowcharts)

- Playbooks are reactive to a trigger

- Playbooks collect data as a reaction

- Trigger signals are generally a message from another tool

Based on the trauma Ryan and I experienced in the past, we wanted to attack the problem using a more resilient approach.

Enter Object Oriented SOAR

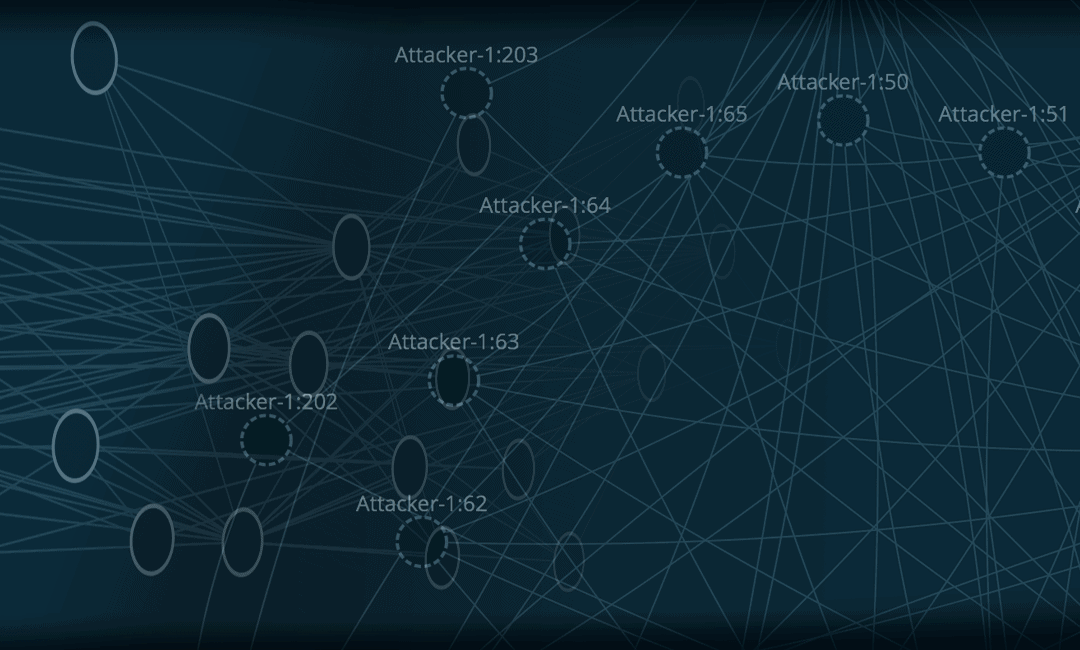

After the first four years of R&D at WitFoo, we had developed a big data architecture that allowed us to comprehend, normalize and contextualize all the data in our environments. We were maintaining state on all the hosts, users, files, emails as well as all the network connections, user sessions and presence of data. In our big data research, this led to implementing several approaches from law enforcement and Graph Theory to track all the relevant information in the context of an attack.

We realized that we could analyze the data in the same way object-oriented programming works. Instead of focusing on messages, we focus on the characteristics of the objects derived from the messages.
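A minimal sketch of that shift, assuming a simplified message format: instead of reacting to each message, every message only updates the attributes of the object it describes. The names `HostState` and `apply_message` and the message fields are hypothetical, not WitFoo's data model.

```python
from dataclasses import dataclass, field


@dataclass
class HostState:
    """State kept per host, independent of which product reported it."""
    address: str
    failed_logins: int = 0
    malware_detections: int = 0
    peers: set = field(default_factory=set)


def apply_message(hosts, message):
    """Fold one normalized message into the state of the host it refers to."""
    host = hosts.setdefault(message["host"], HostState(message["host"]))
    kind = message["kind"]
    if kind == "auth_failure":
        host.failed_logins += 1
    elif kind == "malware":
        host.malware_detections += 1
    elif kind == "connection":
        host.peers.add(message["peer"])
    return host


hosts = {}
for msg in [
    {"host": "10.0.0.5", "kind": "auth_failure"},
    {"host": "10.0.0.5", "kind": "connection", "peer": "10.0.0.9"},
    {"host": "10.0.0.5", "kind": "auth_failure"},
]:
    apply_message(hosts, msg)

print(hosts["10.0.0.5"])
```

Analysis then asks questions of `HostState` rather than of any individual message, so it does not matter which tool produced the message or how that tool worded it.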

Solving 18 Years of SOAR Headaches

Effective SOAR cannot just understand how to execute a script; it must understand your philosophies, standards, and skills. You would not expect a human worker to blindly execute a script without training, and you should hold your computer workers to that same standard. Object-oriented SOAR requires teaching the computer not only the tactics (the script) but all the relevant information around them. Object-oriented SOAR evolved from our research to have the following characteristics:

- Generalized Observations replaced reactive playbooks.

- Observations happen in real-time as messages change the characteristics of the objects.

- Observations are normalized to the object attributes and not subject to product variance.

- Observations update thresholds and other attributes on objects.

- Reactive lookups are no longer necessary in analysis.

- Like physics, the cumulative changes to the object do not normally have to be in linear order.

- Maintenance of observations is extremely low (especially when compared to playbooks).

- Chance of error in analysis is also extremely low compared to low-level SOAR.
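Two of the characteristics above, observations that update thresholds on objects and cumulative changes that do not depend on arrival order, can be illustrated with a small sketch. The class, the threshold values and the event fields are assumptions made up for the example, not WitFoo's implementation.

```python
from dataclasses import dataclass
from itertools import permutations

# Hypothetical thresholds chosen only for this example.
FAILED_LOGIN_THRESHOLD = 3
BYTES_SENT_THRESHOLD = 1_000_000


@dataclass
class UserState:
    name: str
    failed_logins: int = 0
    bytes_sent: int = 0
    suspicious: bool = False


def observe(user, event):
    """Apply one event to the object, then re-evaluate the observation."""
    user.failed_logins += event.get("failed_logins", 0)
    user.bytes_sent += event.get("bytes_sent", 0)
    user.suspicious = (
        user.failed_logins >= FAILED_LOGIN_THRESHOLD
        and user.bytes_sent >= BYTES_SENT_THRESHOLD
    )
    return user


def replay(events):
    """Build a fresh object and feed it the events in the given order."""
    user = UserState("example_user")
    for event in events:
        observe(user, event)
    return user


events = [
    {"failed_logins": 2},
    {"bytes_sent": 1_500_000},
    {"failed_logins": 1},
]

# Every ordering of the same events ends in the same object state.
final_states = [replay(order) for order in permutations(events)]
print(all(state == final_states[0] for state in final_states))
```

Because each event only adds to the object's attributes, the conclusion is reached from the accumulated state rather than from a trigger followed by lookups, so late or out-of-order messages do not change the outcome in this sketch.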

In testing, we found that Object-Oriented SOAR required virtually no ongoing maintenance, was more accurate, and was easier to explain to all stakeholders.

Tactics to Philosophy

Over the last 18 years of trying to automate human work through data analysis, I have found that Object-Oriented & Functional programming approaches (when done well) have the capability to express and execute a set of philosophies that linear scripts cannot. Scripts execute tactics without philosophy governing their use. It makes them the worst kind of workers: they do exactly what you say without any comprehension of the task. By spending the time building models that honor a resilient philosophy, the need to maintain brittle and vulnerable playbooks diminishes.

Summing it Up

When I opened my eyes on September 11th, 2001, I had no idea that the next two decades of my life would be spent trying to merge human work, data, and computer automation into a viable triad. I also had no idea how difficult it would be to get it right. It turns out that if you want to make the computer effective at automating and orchestrating security operations, you must endow it with the same training, awareness, and philosophies you would give an effective human worker. You cannot expect great, dynamic work from an employee who only understands a script, and the same applies to making a great computer worker.